OpenCode

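An open-source AI coding agent, available as a terminal app, a desktop beta, and editor extensions. The OpenCode subscription tutorial on this page is based on the Desktop custom-provider setup.

curl -fsSL https://opencode.ai/install | bash

A single OpenAI-compatible entry point that connects terminal tools such as Claude Code, Codex CLI, CC Switch, OpenCode, OpenClaw, and Hermes; other clients compatible with the OpenAI SDK can use it directly as well.

https://1a1api.top/v1

https://api.1a1api.top/v1

https://1a1api.top

https://api.1a1api.top

Authorization: Bearer sk-...

Different tools use different interface shapes. Use the four paths below to find your entry point, then jump to the matching section.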

OpenAI SDK / curl / most compatible clients: start with https://1a1api.top/v1.

Claude Code / Anthropic SDK: use the Claude Messages root https://api.1a1api.top.

In the API key list, click "Import to CCS" (导入到 CCS) to import Claude / Codex / Gemini providers automatically by group.

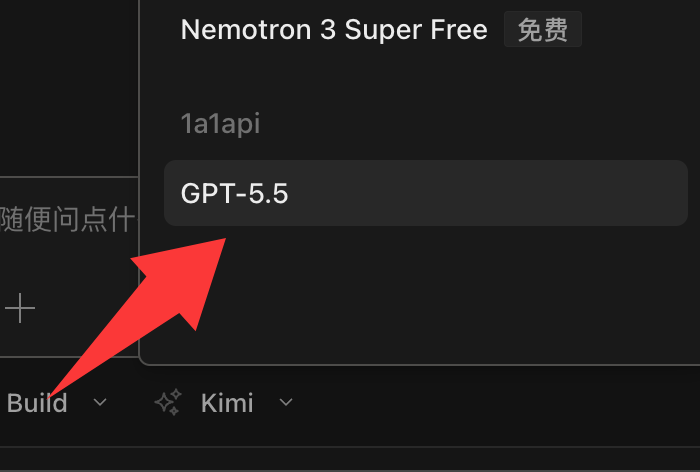

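Go to CC Switch →

04 · OpenCode

Create a key in 1A1API, add a custom provider in OpenCode Desktop, then select GPT-5.5 to test.

Go to OpenCode →

Official entry points and project Releases are collected here. Beginners should start with "Official download / Official docs"; go to GitHub Releases only when you need an installer for a specific system.

CC Switch manages providers, models, and switching for Claude Code, Codex, OpenCode, OpenClaw, Gemini CLI, and Hermes Agent in one place.

After installing it, click "Import to CCS" on the 1A1API API Keys page.

Claude's official coding assistant. The desktop app suits visual use; the CLI suits terminal users and automation scripts.

irm https://claude.ai/install.ps1 | iex

OpenAI's local coding agent. The CLI suits long tasks and engineering work; the desktop / ChatGPT entry suits visual use.

npm install -g @openai/codex

The traditional OpenAI-compatible chat interface.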

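Suited to Codex, Claude Code, and OpenCode.

More stable on long tasks, and less prone to timeouts.

Three steps to get running: create an API key, pick the right Base URL, and send one test request.

Open the 1A1API console, go to the "API Keys" page, click New, copy the key that starts with sk-, and store it safely.

OpenAI SDK: https://1a1api.top/v1; Codex / Responses clients: root https://1a1api.top; Claude Code / Anthropic SDK: https://api.1a1api.top.

For long text, code generation, and agent tasks, enabling stream: true is recommended.

https://api.1a1api.top/v1 connection: reaching this endpoint from some regions may require a proxy or VPN.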
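With stream: true, OpenAI-compatible chat endpoints typically return server-sent events: one `data:` line per chunk, ending with `data: [DONE]`. The sketch below collects the streamed text from such lines. The sample lines are invented for illustration, and the chunk shape is an assumption based on the standard Chat Completions streaming format, not a confirmed description of what 1A1API returns.

```python
import json
from typing import Iterable


def collect_stream_text(lines: Iterable[str]) -> str:
    """Join the delta.content pieces found in Chat Completions SSE lines."""
    parts = []
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and SSE comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                parts.append(text)
    return "".join(parts)


# Hypothetical sample of a streamed reply (assumed shape, not captured output).
SAMPLE_LINES = [
    'data: {"choices":[{"delta":{"role":"assistant"}}]}',
    "",
    'data: {"choices":[{"delta":{"content":"o"}}]}',
    'data: {"choices":[{"delta":{"content":"k"}}]}',
    "data: [DONE]",
]

if __name__ == "__main__":
    print(collect_stream_text(SAMPLE_LINES))
```

In practice the official SDKs already do this parsing; the helper is only meant to show what arrives on the wire when streaming is on.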

curl https://1a1api.top/v1/chat/completions \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gpt-5.5",
    "messages": [
      {
        "role": "user",
        "content": "Introduce 1A1API in one sentence."
      }
    ],
    "stream": true
  }'

curl https://1a1api.top/v1/responses \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gpt-5.5",
    "input": "Reply with exactly: ok",
    "stream": true
  }'

The models actually available depend on the permissions of the group your API key belongs to.

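Suited to traditional OpenAI-compatible clients; it can also call Claude models that the group has mapped and authorized.

POST /v1/chat/completions

Suited to agent, coding, long-context, and tool-calling clients.

POST /v1/responses

Suited to the Claude SDK, Claude Code, and the Claude provider in CC Switch.

POST /v1/messages

Suited to image generation and image tasks inside agent workflows.

POST /v1/images/generations

Claude models have two access routes: ordinary programs should prefer the OpenAI-compatible interface; tools such as Claude Code, CC Switch, and the Anthropic SDK should prefer the Claude Messages interface.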

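First confirm that the group of your current API key has Claude models enabled. Without permission, the request usually returns 403 or a model-not-found error.

OpenAI SDK: https://1a1api.top/v1; Claude Code / Anthropic SDK: https://api.1a1api.top.

Send Reply with exactly: ok first and confirm a response comes back before wiring it into a project or agent workflow.

OpenAI-compatible Base URLs end in /v1; Claude Messages / Claude Code usually take the root. Do not append /v1/messages by hand, because the client builds the endpoint itself.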
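The Base URL rule above can be written down as a small lookup. This is a minimal sketch: the URLs are the ones listed on this page, while the client labels used as dictionary keys and the function name are hypothetical.

```python
# Base URLs as listed on this page; the client labels are illustrative only.
BASE_URLS = {
    "openai-sdk": "https://1a1api.top/v1",        # OpenAI SDK / Chat Completions
    "codex": "https://1a1api.top",                # Codex / Responses clients (root)
    "claude-code": "https://api.1a1api.top",      # Claude Code (root)
    "anthropic-sdk": "https://api.1a1api.top",    # Anthropic SDK (root)
}


def base_url_for(client: str) -> str:
    """Return the Base URL this page recommends for a given client label."""
    key = client.strip().lower()
    if key not in BASE_URLS:
        known = ", ".join(sorted(BASE_URLS))
        raise ValueError(f"unknown client {client!r}; expected one of: {known}")
    return BASE_URLS[key]


if __name__ == "__main__":
    for name in BASE_URLS:
        print(f"{name:14s} -> {base_url_for(name)}")
```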

export ANTHROPIC_API_KEY="sk-your-api-key"

curl https://api.1a1api.top/v1/messages \
  -H "x-api-key: $ANTHROPIC_API_KEY" \
  -H "anthropic-version: 2023-06-01" \
  -H "content-type: application/json" \
  -d '{
    "model": "claude-sonnet-4-6",
    "max_tokens": 256,
    "messages": [
      {
        "role": "user",
        "content": "Reply with exactly: ok"
      }
    ]
  }'

export OPENAI_API_KEY="sk-your-api-key"

curl https://1a1api.top/v1/chat/completions \
  -H "Authorization: Bearer $OPENAI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "claude-sonnet-4-6",
    "messages": [
      {
        "role": "user",
        "content": "Reply with exactly: ok"
      }
    ],
    "stream": true
  }'

export ANTHROPIC_BASE_URL="https://api.1a1api.top"
export ANTHROPIC_AUTH_TOKEN="sk-your-api-key"
export ANTHROPIC_MODEL="claude-sonnet-4-6"
export ANTHROPIC_DEFAULT_OPUS_MODEL="claude-opus-4-7"
export ANTHROPIC_DEFAULT_SONNET_MODEL="claude-sonnet-4-6"
export ANTHROPIC_DEFAULT_HAIKU_MODEL="claude-haiku-4-5"
export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1

claude

Yes, provided the current group has set up an OpenAI-compatible mapping for this model. The safest approach is to test with the curl command above first, then move it into the SDK once it succeeds.

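How do I choose between api.1a1api.top and 1a1api.top? For the OpenAI SDK, Chat Completions, and Images, prefer https://1a1api.top/v1; for Claude Code, the Anthropic SDK, and Claude Messages, prefer https://api.1a1api.top.

What if /v1/v1 shows up? Change the Base URL back to whichever of root or /v1 is correct. For clients like Claude Code, do not put /v1/messages in the Base URL.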
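The /v1/v1 problem usually comes from a Base URL that already ends in /v1 being handed to a client that appends the path itself. The sketch below shows two small checks using only the standard library; both function names are hypothetical helpers, not part of any client.

```python
from urllib.parse import urlsplit, urlunsplit


def collapse_duplicate_v1(url: str) -> str:
    """Collapse repeated /v1 segments, e.g. /v1/v1/messages -> /v1/messages."""
    parts = urlsplit(url)
    cleaned = []
    for segment in (s for s in parts.path.split("/") if s):
        if segment == "v1" and cleaned and cleaned[-1] == "v1":
            continue  # drop the repeated segment
        cleaned.append(segment)
    path = "/" + "/".join(cleaned) if cleaned else ""
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def root_base_url(url: str) -> str:
    """Strip a trailing /v1 or /v1/messages for clients that expect the root."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/")
    for suffix in ("/v1/messages", "/v1"):
        if path.endswith(suffix):
            path = path[: -len(suffix)]
            break
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))


if __name__ == "__main__":
    print(collapse_duplicate_v1("https://api.1a1api.top/v1/v1/messages"))
    print(root_base_url("https://api.1a1api.top/v1"))
```

`root_base_url` is the form to use for a value like ANTHROPIC_BASE_URL; OpenAI SDK style clients should keep the /v1 suffix.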

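The configurations below are organized by the terminal types available in the Sub2API "Use API Key" dialog; most users can copy the one for their tool directly.

Writes ~/.codex/config.toml and ~/.codex/auth.json, and uses OpenAI Responses.

Builds on the Codex CLI setup and enables supports_websockets and responses_websockets_v2.

The officially recommended way to connect a third-party gateway is ANTHROPIC_BASE_URL + ANTHROPIC_AUTH_TOKEN; there is no need to write ~/.claude/config.toml.

Adds 1A1API as a Claude / Codex provider so you can switch the model used by the current terminal tool in one click.

Writes opencode.json, with model definitions and the provider configured together.

Merge into ~/.openclaw/openclaw.json; do not overwrite existing configuration.

Writes ~/.hermes/config.yaml and ~/.hermes/.env.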
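For the OpenClaw entry above, "merge, do not overwrite" can be done with a recursive dictionary merge before writing the file back. The sketch below is one way to do that at the file level. The sample `EXISTING` settings (including the `theme` key and the `other` provider) are hypothetical, and whether OpenClaw applies any additional merge rules of its own is not covered here.

```python
import copy
import json


def deep_merge(existing: dict, patch: dict) -> dict:
    """Return a copy of `existing` with `patch` merged in, key by key."""
    merged = copy.deepcopy(existing)
    for key, value in patch.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)  # recurse into nested objects
        else:
            merged[key] = copy.deepcopy(value)  # new key, or non-dict value replaces
    return merged


# Hypothetical existing file contents.
EXISTING = {
    "theme": "dark",
    "models": {"mode": "merge", "providers": {"other": {"baseUrl": "https://example.invalid/v1"}}},
}

# Fragment to add, using the provider name and model from this page.
PATCH = {
    "models": {"providers": {"sub2api-openai": {"baseUrl": "https://1a1api.top/v1", "api": "openai-completions"}}},
    "agents": {"defaults": {"model": {"primary": "sub2api-openai/gpt-5.5"}}},
}

if __name__ == "__main__":
    print(json.dumps(deep_merge(EXISTING, PATCH), indent=2))
```

Lists are replaced rather than concatenated in this sketch, so a provider's `models` array in the patch would overwrite an array under the same provider name.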

model_provider = "OpenAI"

model = "gpt-5.5"

review_model = "gpt-5.5"

model_reasoning_effort = "xhigh"

disable_response_storage = true

network_access = "enabled"

windows_wsl_setup_acknowledged = true

model_context_window = 1000000

model_auto_compact_token_limit = 900000

[model_providers.OpenAI]

name = "OpenAI"

base_url = "https://1a1api.top"

wire_api = "responses"

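requires_openai_auth = true

{

"OPENAI_API_KEY": "sk-your-api-key"

}

model_reasoning_effort = "xhigh" is an extension setting recognized by clients such as 1A1API / Codex CLI, not a universal parameter of every official OpenAI SDK. When calling ordinary models directly through the official OpenAI SDK, the standard values are usually only low, medium, and high; xhigh takes effect only with the 1A1API relay plus a compatible client.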

model_provider = "OpenAI"

model = "gpt-5.5"

review_model = "gpt-5.5"

model_reasoning_effort = "xhigh"

disable_response_storage = true

network_access = "enabled"

windows_wsl_setup_acknowledged = true

model_context_window = 1000000

model_auto_compact_token_limit = 900000

[model_providers.OpenAI]

name = "OpenAI"

base_url = "https://1a1api.top"

wire_api = "responses"

supports_websockets = true

requires_openai_auth = true

[features]

responses_websockets_v2 = true

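Claude Code's officially recommended approach for third-party gateways is to inject environment variables rather than hand-write ~/.claude/config.toml. Both the macOS / Linux and the Windows forms are given below; put whichever matches your system into your shell configuration.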

export ANTHROPIC_BASE_URL="https://api.1a1api.top"

export ANTHROPIC_AUTH_TOKEN="sk-your-api-key"

export ANTHROPIC_MODEL="claude-sonnet-4-6"

export ANTHROPIC_DEFAULT_OPUS_MODEL="claude-opus-4-7"

export ANTHROPIC_DEFAULT_SONNET_MODEL="claude-sonnet-4-6"

export ANTHROPIC_DEFAULT_HAIKU_MODEL="claude-haiku-4-5"

export CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1

# After editing, run source ~/.zshrc or reopen the terminal, then run

claude

$env:ANTHROPIC_BASE_URL="https://api.1a1api.top"

$env:ANTHROPIC_AUTH_TOKEN="sk-your-api-key"

$env:ANTHROPIC_MODEL="claude-sonnet-4-6"

$env:ANTHROPIC_DEFAULT_OPUS_MODEL="claude-opus-4-7"

$env:ANTHROPIC_DEFAULT_SONNET_MODEL="claude-sonnet-4-6"

$env:ANTHROPIC_DEFAULT_HAIKU_MODEL="claude-haiku-4-5"

$env:CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC="1"

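claude

Do not append /v1/messages. Claude Code builds the endpoint itself, so setting ANTHROPIC_BASE_URL to https://api.1a1api.top is enough; writing https://api.1a1api.top/v1 produces 404s such as /v1/v1/messages.

Do not write ~/.claude/config.toml. That is the convention of third-party proxies or Codex-style clients; the official Claude Code CLI does not read this file, so copying it makes the setup look complete while requests still go to official Anthropic. If you use a tool such as CC Switch or Claude Code Router, it generates its own configuration; follow its documentation.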

{

"provider": {

"openai": {

"options": {

"baseURL": "https://1a1api.top/v1",

"apiKey": "sk-your-api-key"

},

"models": {

"gpt-5.5": {

"name": "GPT-5.5",

"limit": {

"context": 1050000,

"output": 128000

},

"options": {

"store": false

},

"variants": {

"low": {},

"medium": {},

"high": {},

"xhigh": {}

}

},

"gpt-5.4": {

"name": "GPT-5.4",

"limit": {

"context": 1050000,

"output": 128000

},

"options": {

"store": false

},

"variants": {

"low": {},

"medium": {},

"high": {},

"xhigh": {}

}

},

"gpt-5.4-mini": {

"name": "GPT-5.4 Mini",

"limit": {

"context": 400000,

"output": 128000

},

"options": {

"store": false

},

"variants": {

"low": {},

"medium": {},

"high": {},

"xhigh": {}

}

},

"gpt-5.3-codex": {

"name": "GPT-5.3 Codex",

"limit": {

"context": 400000,

"output": 128000

},

"options": {

"store": false

},

"variants": {

"low": {},

"medium": {},

"high": {},

"xhigh": {}

}

}

}

}

},

"agent": {

"build": {

"options": {

"store": false

}

},

"plan": {

"options": {

"store": false

}

}

},

"$schema": "https://opencode.ai/config.json"

}

{

"models": {

"mode": "merge",

"providers": {

"sub2api-openai": {

"baseUrl": "https://1a1api.top/v1",

"apiKey": "sk-your-api-key",

"api": "openai-completions",

"authHeader": true,

"models": [

{

"id": "gpt-5.5",

"name": "GPT-5.5",

"api": "openai-completions",

"reasoning": true,

"input": ["text", "image"],

"cost": {

"input": 0,

"output": 0,

"cacheRead": 0,

"cacheWrite": 0

},

"contextWindow": 272000,

"maxTokens": 128000

},

{

"id": "gpt-5.4",

"name": "GPT-5.4",

"api": "openai-completions",

"reasoning": true,

"input": ["text", "image"],

"cost": {

"input": 0,

"output": 0,

"cacheRead": 0,

"cacheWrite": 0

},

"contextWindow": 272000,

"maxTokens": 128000

},

{

"id": "gpt-5.3-codex",

"name": "GPT-5.3 Codex",

"api": "openai-completions",

"reasoning": true,

"input": ["text", "image"],

"cost": {

"input": 0,

"output": 0,

"cacheRead": 0,

"cacheWrite": 0

},

"contextWindow": 272000,

"maxTokens": 128000

}

]

}

}

},

"agents": {

"defaults": {

"model": {

"primary": "sub2api-openai/gpt-5.5"

}

}

}

}

If openclaw.json already exists, merge only the models.providers and agents.defaults.models fragments; do not overwrite the whole file.

model:
  default: "gpt-5.5"
  provider: "custom"
  base_url: "https://1a1api.top/v1"

OPENAI_API_KEY="sk-your-api-key"

import OpenAI from "openai";

const client = new OpenAI({

apiKey: process.env.OPENAI_API_KEY,

baseURL: "https://1a1api.top/v1",

});

const response = await client.chat.completions.create({

model: "gpt-5.5",

messages: [

{ role: "user", content: "Reply with exactly: ok" },

],

});

console.log(response.choices[0]?.message?.content);

from openai import OpenAI

client = OpenAI(

api_key="sk-your-api-key",

base_url="https://1a1api.top/v1",

)

response = client.chat.completions.create(

model="gpt-5.5",

messages=[

{"role": "user", "content": "Reply with exactly: ok"}

],

)

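print(response.choices[0].message.content)

This tutorial is for users who already have a 1A1API subscription or available quota and want to use 1A1API models in OpenCode Desktop. After following it, you can pick 1A1API models in OpenCode for chat and coding.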

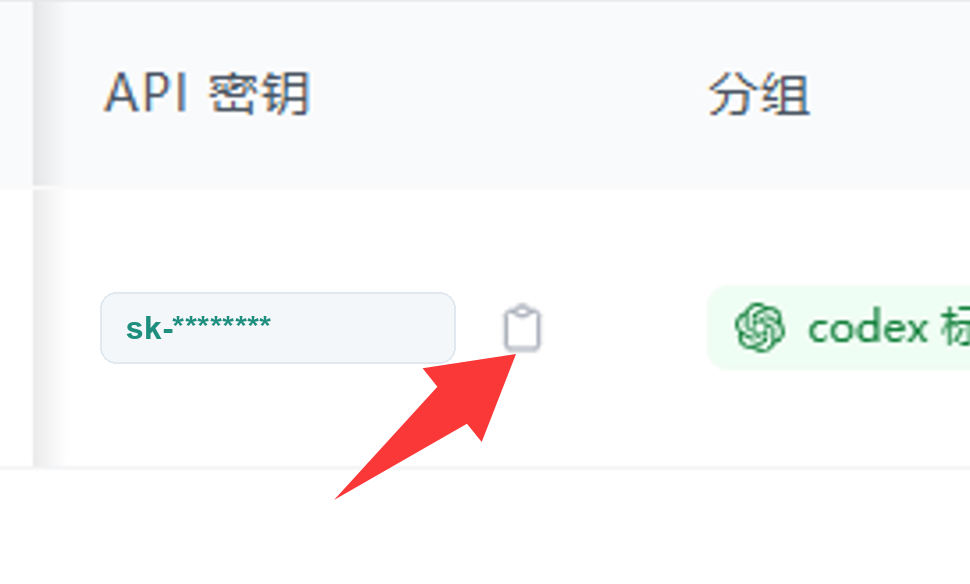

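An API key starting with sk-. Paste the API key only into OpenCode's API key field; do not post it in groups, chat windows, public screenshots, or public repositories. The keys in the screenshots below have been redacted.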

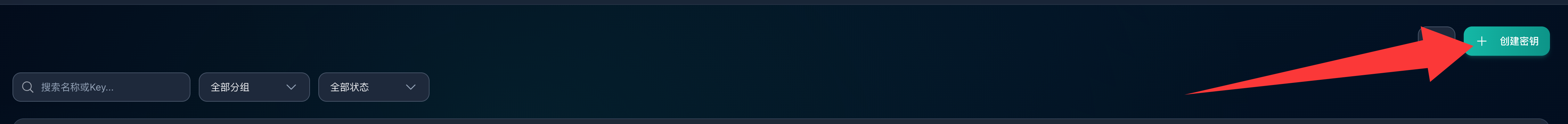

登录 1A1API 后台,在左侧菜单点击“API 密钥”,进入后点击右上角“+ 创建密钥”。创建完成后,在密钥列表里找到新密钥并点击复制按钮。

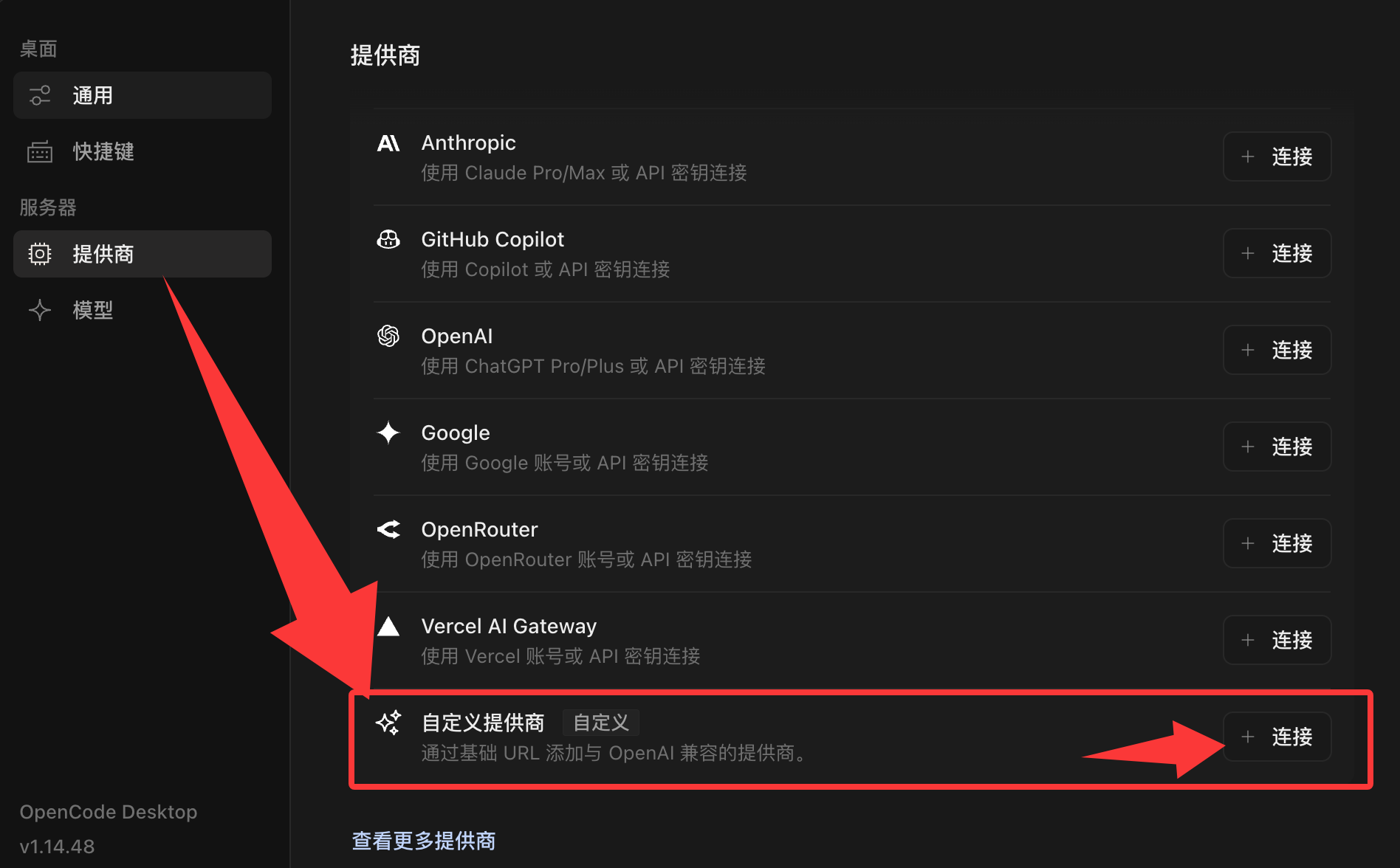

启动 OpenCode Desktop,在左下角点击齿轮图标进入设置。在“服务器”下进入“提供商”,找到“自定义提供商”,点击“+ 连接”。

提供商 ID 填 1a1api,显示名称填 1A1API,基础 URL 填 https://1a1api.top/v1,API 密钥粘贴刚才复制的 Key,模型填当前订阅可用模型。

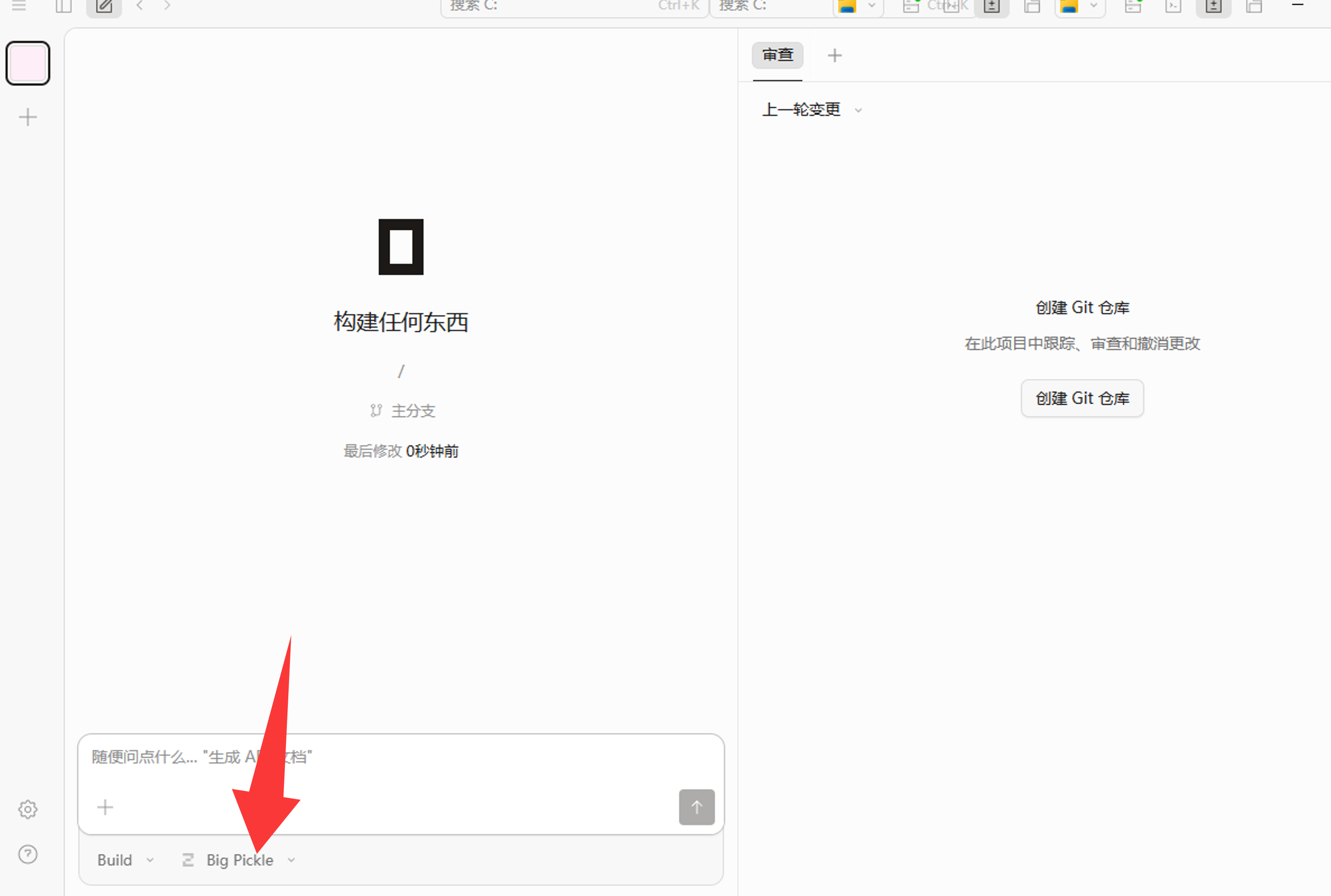

回到 OpenCode 主界面,在输入框附近点击模型选择器,找到 1a1api 分组,选择 GPT-5.5 或你的订阅模型。

1a1api1A1APIhttps://1a1api.top/v1sk-... 密钥。GPT-5.5 或 gpt-5.5。你好,请用一句话回复:我正在通过 1A1API 在 OpenCode 中工作。

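After logging in to the console, click "API Keys" in the left menu.

Create a new key from the top right of the API Keys page.

Keys are usually shown only partially; the copied value is the complete key.

In OpenCode Desktop, click the gear icon in the bottom-left corner.

Go to "Server → Providers", find the custom provider, and click Connect.

Fill in the ID, name, Base URL, API key, and model according to the table above.

Click the model selector near the input box.

Choose GPT-5.5 or another model available under your current subscription.

Check that the API key was copied in full, that it was pasted into the "API key" field, and that the account still has available quota.

In most cases the model name was entered incorrectly. Confirm the model ID in the 1A1API console or plan description, paying attention to case and hyphens.

This means the quota of the current key, account, group, or subscription may be exhausted, or too many requests were sent in a short time. Wait a while and retry, or check your quota in the 1A1API console.

Go back to "Settings → Server → Providers" and confirm the 1A1API configuration is still there; if it still does not appear, restart OpenCode Desktop and open the model selector again.

This tutorial uses https://1a1api.top/v1, as in the screenshots. If your console provides a different OpenAI-compatible address, follow what the console currently shows; it usually needs to end in /v1.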

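The "API Keys" list in 1A1API / Sub2API has an "Import to CCS" button. Based on the group the key belongs to, it generates a ccswitch://v1/import link that CC Switch recognizes, which is less error-prone than copying the Base URL, model, and key by hand.

Install CC Switch first, then click "Import to CCS" on the "API Keys" page of the 1A1API console. When the browser prompts "Open CC Switch", allow it, then confirm the import in CC Switch.

The import link carries the current sk-... key, the site's API Base URL, the provider name, the client type, a usage-query script, and a 30-minute auto-refresh interval. Do not post a link containing a real key in public chats or repositories.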
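Because the import link embeds the real key, it helps to redact it before sharing the link. The sketch below swaps the key for a placeholder using the standard library. It assumes the key travels in a query parameter named `apiKey`, as in the link structure shown on this page; the function name and the sample key are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def redact_api_key(link: str, placeholder: str = "sk-your-api-key") -> str:
    """Replace the apiKey query parameter of an import link with a placeholder."""
    parts = urlsplit(link)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    redacted = [(key, placeholder if key == "apiKey" else value) for key, value in pairs]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(redacted), parts.fragment)
    )


if __name__ == "__main__":
    # "sk-REAL123" stands in for a real key; it is not a valid credential.
    link = (
        "ccswitch://v1/import?resource=provider&app=claude&name=1A1API"
        "&endpoint=https%3A%2F%2Fapi.1a1api.top&apiKey=sk-REAL123&usageAutoInterval=30"
    )
    print(redact_api_key(link))
```

The other parameters are re-encoded but otherwise unchanged, so the redacted link still shows the structure without exposing the credential.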

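First confirm that CC Switch is installed and that the browser is allowed to open local protocol links such as ccswitch://.

Open "API Keys" and confirm that the key is enabled and that its group matches the tool you want to use: Claude Code, Codex, Gemini, or Antigravity.

Click "Import to CCS" in the actions area to the right of the key. For an Antigravity group, you are first asked whether to import it as Claude Code or Gemini CLI.

Once CC Switch opens, check the provider name, app type, endpoint, and model before confirming the save. Take care not to overwrite the wrong existing provider.

In Claude Code, ask Reply with ok; after switching Codex, reopening the terminal is recommended; for Gemini CLI, also run a minimal request first.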

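app=claude, for Claude Code. The endpoint uses the root of the console's public API Base URL; there is no need to write /v1/messages by hand.

app=codex, for Codex CLI. The Sub2API button currently carries the default model gpt-5.4; after importing, you can change it in CC Switch to a model available to your account, such as gpt-5.5.

app=gemini. Suited to Gemini CLI or workflows that support a Gemini provider.

/antigravity.

The import carries a /v1/usage query script and sets usageAutoInterval=30, so CC Switch can check the balance / remaining quota periodically.

resource=provider

app=claude / codex / gemini

name=site name, e.g. 1A1API

homepage=the console's public API Base URL

endpoint=generated automatically from the key's group

apiKey=the current API key sk-...

configFormat=json

usageEnabled=true

usageScript=built-in /v1/usage usage-query script

usageAutoInterval=30

# Only OpenAI / Codex groups additionally carry:

model=gpt-5.4

Before sharing a link, replace apiKey=sk-... with a placeholder.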

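If the browser does not launch CC Switch, or an administrator has hidden the "Import to CCS" button, use the manual method below. The key points of a manual import are the same: check the key's group first, then pick the app type, and finally verify with a real request.

Copy the sk-... key from the 1A1API console and note which group it belongs to. Different groups map to different apps and endpoints.

In CC Switch, click Add provider. Choose Claude for Claude Code, Codex for Codex CLI, and Gemini for Gemini CLI.

Fetching the model list is best; if that fails, you can enter the model ID by hand. After saving, use Stream Check / health check to confirm that the key, model, endpoint, and streaming responses all work.

Claude: https://api.1a1api.top; common models are claude-sonnet-4-6, claude-opus-4-7, and claude-haiku-4-5.

Codex: https://1a1api.top; start with the button's default model gpt-5.4, or switch to gpt-5.5 if your account has it.

/antigravity.

export OPENAI_API_KEY="sk-your-api-key"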

# Codex / OpenAI-compatible model list
curl https://1a1api.top/v1/models \
  -H "Authorization: Bearer $OPENAI_API_KEY"

# Claude-compatible model list; if model discovery is not supported here, enter the model name manually in CC Switch
curl https://api.1a1api.top/v1/models \
  -H "Authorization: Bearer $OPENAI_API_KEY"

# CC Switch one-click import uses a usage endpoint similar to this one
curl https://1a1api.top/v1/usage \
  -H "Authorization: Bearer $OPENAI_API_KEY"

Claude Code:

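App: Claude

Provider name: 1A1API Claude

API format: Anthropic Messages

Base URL: https://api.1a1api.top

API Key: sk-your-api-key

Fetch models: click the download / fetch-models button next to the model input

Default model: claude-sonnet-4-6

Verify: click Health check / Stream Check on the provider card

Codex CLI:

App: Codex

Provider name: 1A1API Codex

API format: OpenAI Responses

Base URL: https://1a1api.top

API Key: sk-your-api-key

Fetch models: click the download / fetch-models button next to the model input

Default model: gpt-5.4 or gpt-5.5

Verify: reopen the Codex terminal after enabling

# Claude provider deep-link structure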

ccswitch://v1/import?resource=provider&app=claude&name=1A1API&homepage=https%3A%2F%2Fapi.1a1api.top&endpoint=https%3A%2F%2Fapi.1a1api.top&apiKey=sk-your-api-key&configFormat=json&usageEnabled=true&usageScript=BASE64_USAGE_SCRIPT&usageAutoInterval=30

# Codex provider deep-link structure

ccswitch://v1/import?resource=provider&app=codex&model=gpt-5.4&name=1A1API&homepage=https%3A%2F%2F1a1api.top&endpoint=https%3A%2F%2F1a1api.top&apiKey=sk-your-api-key&configFormat=json&usageEnabled=true&usageScript=BASE64_USAGE_SCRIPT&usageAutoInterval=30

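# Security advice:

# 1. Never put a real sk-... key in a public tutorial

# 2. Before sending a link to your team, clear the apiKey parameter or replace it with a placeholder

# 3. Regular users should click "Import to CCS" in the console rather than hand-write this long string

This usually means CC Switch is not installed, the system has not registered the ccswitch:// protocol, or the browser blocked the request to open an external app. Open CC Switch once, then retry in the browser; if that still fails, use the manual checklist.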

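"Import to CCS" decides the app type from the group the API key belongs to. An OpenAI group imports as Codex; an Anthropic / Claude group imports as Claude. To use a different app, switch the key's group or create a new key in the matching group.

An import only means the configuration was written; a health check actually sends a request and confirms that the API key, model permissions, endpoint, streaming response, and network all work.

One-click import includes a /v1/usage query script. If the balance does not show, first confirm that the key is still enabled, that the account is allowed to access the usage endpoint, and that the Base URL is not blocked by the browser or a proxy.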

Name: 1A1API Claude

Type: Claude / Anthropic / Claude Code

Base URL: https://api.1a1api.top

API Key / Auth Token: sk-your-api-key

Default model: claude-sonnet-4-6

Opus model: claude-opus-4-7

Sonnet model: claude-sonnet-4-6

Haiku model: claude-haiku-4-5

Extra environment variable: CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC=1

Name: 1A1API Codex

Type: OpenAI / Responses / Codex

Base URL: https://1a1api.top

API Key: sk-your-api-key

Model: gpt-5.4 or gpt-5.5

Review model: gpt-5.4 or gpt-5.5

Reasoning Effort: xhigh

Wire API: responses

disable_response_storage: true

network_access: enabled

# After switching in CC Switch, check that these two files were synced

sed -n '1,80p' ~/.codex/config.toml

sed -n '1,40p' ~/.codex/auth.json

# Key things to check:

# 1. Whether model_provider is OpenAI or the provider name you set in CC Switch

# 2. Whether base_url is https://1a1api.top

# 3. Whether auth.json contains the current 1A1API sk-... key

# 4. Do not expect an already-open codex window to hot-switch; reopening is the safest option

First confirm that the key entered in CC Switch is the 1A1API sk-... key, not one from an old relay, an official key, or an expired key; then check whether Codex's auth.json was actually synced.

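In most cases the Base URL is wrong. The Claude provider uses https://api.1a1api.top; do not write https://api.1a1api.top/v1/messages.

Codex does not necessarily hot-reload its configuration. Check whether ~/.codex/config.toml changed, then reopen Codex. Judging only from an old window that is still running is misleading.

If you are not familiar with APIs, keys, and client configuration, read the illustrated tutorial first, then come back to this page to copy the terminal configuration.

Covers beginner-oriented workflows such as registering, creating an API key, copying configuration, and viewing usage.

The group determines which models, quota rules, and calling capabilities the current API key has.

Balance is charged per actual call; subscriptions provide a daily or per-period quota according to the plan. Logs and dashboards may count the two separately.

Specific groups, plan quotas, and billing multipliers are whatever the console shows in real time; this page lists no prices, so that it cannot drift out of sync. To compare, check the console directly.

Logs help you determine which group a request went through, how many tokens it used, how much it cost, and where it was slow.

A large number such as 541.1K usually indicates cache reads or context-cache tokens; it does not necessarily mean about 540,000 new tokens were input in this request. To judge cost, look at the "Cost" field first.

Controls whether the account takes part in /responses/compact scheduling.

stream: true.

The API key is wrong, Bearer is missing, or the key was deleted or disabled. Check Authorization: Bearer sk-your-api-key.

The group is not allowed to call the model, the balance is insufficient, the subscription has expired, or the group has not enabled the corresponding scheduling capability.

The Base URL is wrong, the client appended /v1 twice, or the endpoint does not exist.

Requests are too fast, concurrency is too high, or the service is currently busy. Lower concurrency and retry later; if it persists, send the error time, model, and API key name to support.

The request went a long time without a streamed response. Enable streaming and split the task first; if large projects or large image generation still hit CF errors, try changing the Base URL to https://api.1a1api.top/v1. Some regions may need a proxy or VPN.

First-token latency is the wait before the model starts producing output. If it becomes noticeably slower, first check whether the context is too long or has overflowed; start a new session, trim history, compress logs, split files, or reduce the amount of input sent at once, then try again.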
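For the "requests too fast" case above, "retry later" is often implemented as capped exponential backoff. The following is a minimal sketch under that assumption. `RateLimited` is a hypothetical exception your own request code would raise when the gateway reports rate limiting, and the delay values are illustrative, not limits published by 1A1API.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical marker raised by caller code on a rate-limit response."""


def backoff_delays(retries: int, base: float = 1.0, cap: float = 30.0) -> List[float]:
    """Delays that double each attempt, starting at `base` and capped at `cap`."""
    return [min(cap, base * (2 ** attempt)) for attempt in range(retries)]


def call_with_backoff(
    func: Callable[[], T],
    delays: List[float],
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call func, sleeping through `delays` while it raises RateLimited."""
    for delay in delays:
        try:
            return func()
        except RateLimited:
            sleep(delay)
    return func()  # final attempt; any error now propagates to the caller


if __name__ == "__main__":
    print(backoff_delays(5))
```

Passing `sleep` as a parameter keeps the helper easy to test without real waiting; lowering concurrency, as the text suggests, is still the first thing to try.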